|

|

@@ -2,7 +2,8 @@

|

|

|

|

|

|

\usepackage[english]{babel}

|

|

|

\usepackage[utf8]{inputenc}

|

|

|

-\usepackage{hyperref,graphicx,float}

|

|

|

+\usepackage{hyperref,graphicx,float,tikz}

|

|

|

+\usetikzlibrary{shapes,arrows}

|

|

|

|

|

|

% Link colors

|

|

|

\hypersetup{colorlinks=true,linkcolor=black,urlcolor=blue,citecolor=DarkGreen}

|

|

|

@@ -41,8 +42,8 @@ these frameworks have no access to their multi-touch events.

|

|

|

|

|

|

% Motivation

|

|

|

This problem was observed during an attempt to create a multi-touch

|

|

|

-``interactor'' class for the Visualization Toolkit (VTK \cite{VTK}). Because

|

|

|

-VTK provides the application framework here, it is undesirable to use an entire

|

|

|

+``interactor'' class for the Visualization Toolkit \cite[VTK]{VTK}. Because VTK

|

|

|

+provides the application framework here, it is undesirable to use an entire

|

|

|

framework like Qt simultaneously only for its multi-touch support.

|

|

|

|

|

|

% Rough goal

|

|

|

@@ -65,7 +66,6 @@ events.

|

|

|

% Sub-questions

|

|

|

To design such a mechanism properly, the following questions are relevant:

|

|

|

\begin{itemize}

|

|

|

- \item What are the requirements of the mechanism to be universal?

|

|

|

\item What is the input of the mechanism? Different touch drivers have

|

|

|

different APIs. To be able to support different drivers (which is

|

|

|

highly desirable), there should probably be a translation from the

|

|

|

@@ -73,25 +73,33 @@ events.

|

|

|

\item How can extendability be accomplished? The set of supported events

|

|

|

should not be limited to a single implementation, but an application

|

|

|

should be able to define its own custom events.

|

|

|

+ \item How can the mechanism be used by different programming languages?

|

|

|

+ A universal mechanism should not be restricted to use in only one

|

|

|

+ language.

|

|

|

\item Can events be shared with multiple processes at the same time? For

|

|

|

example, a network implementation could run as a service instead of

|

|

|

within a single application, triggering events in any application that

|

|

|

needs them.

|

|

|

- \item Is performance an issue? For example, an event loop with rotation

|

|

|

- detection could swallow up more processing resources than desired.

|

|

|

+ % FIXME: are we still going to do something with the item below?

|

|

|

+ %\item Is performance an issue? For example, an event loop with rotation

|

|

|

+ % detection could swallow up more processing resources than desired.

|

|

|

+ \item How can the mechanism be integrated in a VTK interactor?

|

|

|

\end{itemize}

|

|

|

|

|

|

% Scope

|

|

|

- The scope of this thesis includes the design of an multi-touch triggering

|

|

|

- mechanism, a reference implementation of this design, and its integration

|

|

|

- into a VTK interactor. To be successful, the design should allow for

|

|

|

- extensions to be added to any implementation. The reference implementation

|

|

|

- is a Proof of Concept that translates TUIO events to some simple touch

|

|

|

- gestures that are used by a VTK interactor.

|

|

|

+ The scope of this thesis includes the design of a universal multi-touch

|

|

|

+ triggering mechanism, a reference implementation of this design, and its

|

|

|

+ integration into a VTK interactor. To be successful, the design should

|

|

|

+ allow for extensions to be added to any implementation.

|

|

|

+

|

|

|

+ The reference implementation is a Proof of Concept that translates TUIO

|

|

|

+ events to some simple touch gestures that are used by a VTK interactor.

|

|

|

+ Being a Proof of Concept, the reference implementation itself does not

|

|

|

+ necessarily need to meet all the requirements of the design.

|

|

|

|

|

|

\section{Structure of this document}

|

|

|

|

|

|

- % TODO

|

|

|

+ % TODO: only once the document is finished

|

|

|

|

|

|

\chapter{Related work}

|

|

|

|

|

|

@@ -134,7 +142,25 @@ events.

|

|

|

detected by different gesture trackers, thus separating gesture detection

|

|

|

code into maintainable parts.

|

|

|

|

|

|

+ \section{Processing implementation of simple gestures in Android}

|

|

|

+

|

|

|

+ An implementation of a detection mechanism for some simple multi-touch

|

|

|

+ gestures (tap, double tap, rotation, pinch and drag) using

|

|

|

+ Processing\footnote{Processing is a Java-based development environment with

|

|

|

+ an export possibility for Android. See also \url{http://processing.org/}.}

|

|

|

+ can be found in a forum on the Processing website

|

|

|

+ \cite{processingMT}. The implementation is fairly simple, but it yields

|

|

|

+ some very appealing results. The detection logic of all gestures is

|

|

|

+ combined in a single class. This does not allow for extendability, because

|

|

|

+ the complexity of this class would increase to an undesirable level (as

|

|

|

+ predicted by the GART article \cite{GART}). However, the detection logic

|

|

|

+ itself is partially re-used in the reference implementation of the

|

|

|

+ universal gesture detection mechanism.

|

|

|

+

|

|

|

+\chapter{Preliminary}

|

|

|

+

|

|

|

\section{The TUIO protocol}

|

|

|

+ \label{sec:tuio}

|

|

|

|

|

|

The TUIO protocol \cite{TUIO} defines a way to geometrically describe

|

|

|

tangible objects, such as fingers or fiducials on a multi-touch table. The

|

|

|

@@ -142,15 +168,13 @@ events.

|

|

|

information is sent to the TUIO UDP port (3333 by default).

|

|

|

|

|

|

For efficiency reasons, the TUIO protocol is encoded using the Open Sound

|

|

|

- Control (OSC)\footnote{\url{http://opensoundcontrol.org/specification}}

|

|

|

- format. An OSC server/client implementation is available for Python:

|

|

|

- pyOSC\footnote{\url{https://trac.v2.nl/wiki/pyOSC}}.

|

|

|

+ Control \cite[OSC]{OSC} format. An OSC server/client implementation is

|

|

|

+ available for Python: pyOSC \cite{pyOSC}.

|

|

|

|

|

|

- A Python implementation of the TUIO protocol also exists:

|

|

|

- pyTUIO\footnote{\url{http://code.google.com/p/pytuio/}}. However, the

|

|

|

- execution of an example script yields an error regarding Python's built-in

|

|

|

- \texttt{socket} library. Therefore, the reference implementation uses the

|

|

|

- pyOSC package to receive TUIO messages.

|

|

|

+ A Python implementation of the TUIO protocol also exists: pyTUIO

|

|

|

+ \cite{pyTUIO}. However, the execution of an example script yields an error

|

|

|

+ regarding Python's built-in \texttt{socket} library. Therefore, the

|

|

|

+ reference implementation uses the pyOSC package to receive TUIO messages.

|

|

|

|

|

|

The two most important message types of the protocol are ALIVE and SET

|

|

|

messages. An ALIVE message contains the list of session IDs that are

|

|

|

@@ -188,26 +212,10 @@ events.

|

|

|

scale these values back to the actual screen dimension.

|

|

|

\end{quote}

|

|

|

|

|

|

- In other words, the design of the gesture detection mechanism should

|

|

|

- incorporate a translation from driver-specific coordinates to pixel

|

|

|

- coordinates.

|

|

|

-

|

|

|

- \section{Processing implementation of simple gestures in Android}

|

|

|

+ \section{The Visualization Toolkit}

|

|

|

+ \label{sec:vtk}

|

|

|

|

|

|

- An implementation of a detection mechanism for some simple multi-touch

|

|

|

- gestures (tap, double tap, rotation, pinch and drag) using

|

|

|

- Processing\footnote{Processing is a Java-based development environment with

|

|

|

- an export possibility for Android. See also \url{http://processing.org/.}}

|

|

|

- can be found found in a forum on the Processing website

|

|

|

- \cite{processingMT}. The implementation is fairly simple, but it yields

|

|

|

- some very appealing results. The detection logic of all gestures is

|

|

|

- combined in a single class. This does not allow for extendability, because

|

|

|

- the complexity of this class would increase to an undesirable level (as

|

|

|

- predicted by the GART article \cite{GART}). However, the detection logic

|

|

|

- itself is partially re-used in the reference implementation of the

|

|

|

- universal gesture detection mechanism.

|

|

|

-

|

|

|

-% TODO

|

|

|

+ % TODO

|

|

|

|

|

|

\chapter{Experiments}

|

|

|

|

|

|

@@ -227,6 +235,71 @@ events.

|

|

|

|

|

|

% Proof of Concept: VTK interactor

|

|

|

|

|

|

+ \section{Experimenting with TUIO and event bindings}

|

|

|

+ \label{sec:experimental-draw}

|

|

|

+

|

|

|

+ When designing a software library, its API should be understandable and

|

|

|

+ easy to use for programmers. To find out the basic requirements of the API

|

|

|

+ to be usable, an experimental program has been written based on the

|

|

|

+ Processing code from \cite{processingMT}. The program receives TUIO events

|

|

|

+ and translates them to point \emph{down}, \emph{move} and \emph{up} events.

|

|

|

+ These events are then interpreted to be (double or single) \emph{tap},

|

|

|

+ \emph{rotation} or \emph{pinch} gestures. A simple drawing program then

|

|

|

+ draws the current state to the screen using the PyGame library. The output

|

|

|

+ of the program can be seen in figure \ref{fig:draw}.

|

|

|

+

|

|

|

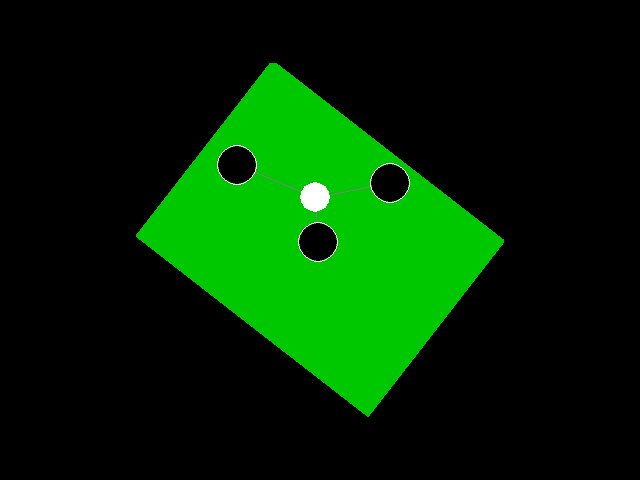

+ \begin{figure}[H]

|

|

|

+ \centering

|

|

|

+ \includegraphics[scale=0.4]{data/experimental_draw.png}

|

|

|

+ \caption{Output of the experimental drawing program. It draws the touch

|

|

|

+ points and their centroid on the screen (the centroid is used

|

|

|

+ as center point for rotation and pinch detection). It also

|

|

|

+ draws a green rectangle which responds to rotation and pinch

|

|

|

+ events.}

|

|

|

+ \label{fig:draw}

|

|

|

+ \end{figure}

|

|

|

+

|

|

|

+ One of the first observations is the fact that TUIO's \texttt{SET} messages

|

|

|

+ use the TUIO coordinate system, as described in section \ref{sec:tuio}.

|

|

|

+ The test program multiplies these with its own dimensions, thus showing the

|

|

|

+ entire screen in its window. Also, the implementation only works using the

|

|

|

+ TUIO protocol. Other drivers are not supported.
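The scaling step described above can be sketched in a few lines of Python. This is only an illustration, assuming that TUIO positions are normalized to the range 0.0 to 1.0; the function name `tuio_to_pixels` and the 1920x1080 screen size are hypothetical and do not come from the experimental program.

```python
def tuio_to_pixels(x, y, width, height):
    """Scale normalized TUIO coordinates (0.0 to 1.0) to pixel coordinates.

    The result is clamped so that x == 1.0 or y == 1.0 still maps to the
    last valid pixel instead of one position past the edge.
    """
    px = min(int(x * width), width - 1)
    py = min(int(y * height), height - 1)
    return px, py


# Hypothetical screen size, used only for illustration.
print(tuio_to_pixels(0.5, 0.25, 1920, 1080))
```

A window-relative position could then be obtained by subtracting the pixel position of the window's upper left corner, which is one possible way to restrict events to an application window.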

|

|

|

+

|

|

|

+ Though using relatively simple math, the rotation and pinch events work

|

|

|

+ surprisingly well. Both rotation and pinch use the centroid of all touch

|

|

|

+ points. A \emph{rotation} gesture uses the difference in angle relative to

|

|

|

+ the centroid of all touch points, and \emph{pinch} uses the difference in

|

|

|

+ distance. Both values are normalized using division by the number of touch

|

|

|

+ points. A pinch event contains a scale factor, and therefore uses a

|

|

|

+ division of the current by the previous average distance to the centroid.
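The rotation and pinch computation described above can be sketched as follows. This is a minimal sketch, not the code of the experimental program: all function names are hypothetical, and it assumes that the previous and current point lists hold the same touch points in the same order.

```python
import math


def centroid(points):
    """Return the mean position of a list of (x, y) touch points."""
    n = len(points)
    return (sum(x for x, _ in points) / n, sum(y for _, y in points) / n)


def average_distance(points):
    """Return the mean distance of the points to their own centroid."""
    cx, cy = centroid(points)
    return sum(math.hypot(x - cx, y - cy) for x, y in points) / len(points)


def rotation_angle(prev_points, cur_points):
    """Mean change in angle (radians) of the points around the centroid."""
    pcx, pcy = centroid(prev_points)
    ccx, ccy = centroid(cur_points)
    total = 0.0
    for (px, py), (cx, cy) in zip(prev_points, cur_points):
        delta = math.atan2(cy - ccy, cx - ccx) - math.atan2(py - pcy, px - pcx)
        # Wrap into [-pi, pi) so that crossing the +/-pi boundary does not
        # show up as a jump of almost a full turn.
        total += (delta + math.pi) % (2 * math.pi) - math.pi
    return total / len(cur_points)


def pinch_scale(prev_points, cur_points):
    """Scale factor: current average distance divided by the previous one."""
    prev = average_distance(prev_points)
    if prev == 0:
        return 1.0  # a single point (or coinciding points) cannot pinch
    return average_distance(cur_points) / prev
```

Dividing by the number of points keeps both values comparable regardless of how many fingers take part in the gesture.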

|

|

|

+

|

|

|

+ There is a flaw in this implementation. Since the centroid is calculated

|

|

|

+ using all current touch points, there cannot be two or more rotation or

|

|

|

+ pinch gestures simultaneously. On a large multi-touch table, it is

|

|

|

+ desirable to support interaction with multiple hands, or multiple persons,

|

|

|

+ at the same time.

|

|

|

+

|

|

|

+ Also, the different detection algorithms are all implemented in the same

|

|

|

+ file, making it complex to read or debug, and difficult to extend.

|

|

|

+

|

|

|

+ \section{VTK interactor}

|

|

|

+

|

|

|

+ % TODO

|

|

|

+ % VTK has its own pipeline; the mechanism must run alongside it

|

|

|

+

|

|

|

+ \section{Summary of observations}

|

|

|

+ \label{sec:observations}

|

|

|

+

|

|

|

+ \begin{itemize}

|

|

|

+ \item The TUIO protocol uses a distinctive coordinate system and set of

|

|

|

+ messages.

|

|

|

+ \item Touch events can also occur outside of the application window.

|

|

|

+ \item Gestures that use multiple touch points are using all touch

|

|

|

+ points (not a subset of them).

|

|

|

+ \item Code complexity increases when detection algorithms are added.

|

|

|

+ \item % TODO: VTK interactor observations

|

|

|

+ \end{itemize}

|

|

|

+

|

|

|

% -------

|

|

|

% Results

|

|

|

% -------

|

|

|

@@ -234,49 +307,176 @@ events.

|

|

|

\chapter{Design}

|

|

|

|

|

|

\section{Requirements}

|

|

|

+ \label{sec:requirements}

|

|

|

|

|

|

- % TODO

|

|

|

- % ondersteunen van meerdere drivers

|

|

|

- % gesture detectie koppelen aan bepaald gedeelte van het scherm

|

|

|

- % scheiden van detectiecode voor verschillende gesture types

|

|

|

- % eventueel te gebruiken in meerdere talen

|

|

|

-

|

|

|

- \section{Input server}

|

|

|

+ From the observations in section \ref{sec:observations}, a number of

|

|

|

+ requirements can be specified for the design of the event mechanism:

|

|

|

|

|

|

- % TODO

|

|

|

- % vertaling driver naar point down, move, up

|

|

|

- % TUIO in reference implementation

|

|

|

-

|

|

|

- \section{Gesture server}

|

|

|

-

|

|

|

- % TODO

|

|

|

- % vertaling naar pixelcoordinaten

|

|

|

- % toewijzing aan windows

|

|

|

+ \begin{itemize}

|

|

|

+ % translate driver-specific events to a common format

|

|

|

+ \item To be able to support multiple input drivers, there must be a

|

|

|

+ translation from driver-specific messages to some common format

|

|

|

+ that can be used in gesture detection algorithms.

|

|

|

+ % assign events to a GUI window (windows)

|

|

|

+ \item An application GUI window should be able to receive only events

|

|

|

+ occurring within that window, and not outside of it.

|

|

|

+ % separate groups of touch points for different gestures (windows)

|

|

|

+ \item To support multiple objects that are performing different

|

|

|

+ gestures at the same time, the mechanism must be able to perform

|

|

|

+ gesture detection on a subset of the active touch points.

|

|

|

+ % separate detection code for different gesture types

|

|

|

+ \item To avoid an increase in code complexity when adding new detection

|

|

|

+ algorithms, detection code of different gesture types must be

|

|

|

+ separated.

|

|

|

+ \end{itemize}

|

|

|

|

|

|

- \section{Windows}

|

|

|

+ \section{Components}

|

|

|

+

|

|

|

+ Based on the requirements from section \ref{sec:requirements}, a design

|

|

|

+ for the mechanism has been created. The design consists of a number of

|

|

|

+ components, each having a specific set of tasks.

|

|

|

+

|

|

|

+ \subsection{Event server}

|

|

|

+

|

|

|

+ % translation from driver to point down, move, up

|

|

|

+ % translation to screen pixel coordinates

|

|

|

+ % TUIO in reference implementation

|

|

|

+

|

|

|

+ The \emph{event server} is an abstraction for driver-specific server

|

|

|

+ implementations, such as a TUIO server. It receives driver-specific

|

|

|

+ messages and translates these to a common set of events and a common

|

|

|

+ coordinate system.

|

|

|

+

|

|

|

+ A minimal example of a common set of events is $\{point\_down,

|

|

|

+ point\_move, point\_up\}$. This is the set used by the reference

|

|

|

+ implementation. Respectively, these events represent an object being

|

|

|

+ placed on the screen, moving along the surface of the screen, and being

|

|

|

+ released from the screen.

|

|

|

+

|

|

|

+ A more extended set could also contain the same three events for a

|

|

|

+ surface touching the screen. However, a surface can have a rotational

|

|

|

+ property, like the ``fiducials'' type in the TUIO protocol. This

|

|

|

+ results in the set $\{point\_down, point\_move, point\_up, surface\_down,

|

|

|

+ surface\_move, surface\_up,\\surface\_rotate\}$.

|

|

|

+

|

|

|

+ An important note here is that similar events triggered by different

|

|

|

+ event servers must have the same event type and parameters. In other

|

|

|

+ words, the output of the event servers should be determined by the

|

|

|

+ gesture servers (not the other way around).

|

|

|

+

|

|

|

+ The output of an event server implementation should also use a common

|

|

|

+ coordinate system, namely the coordinate system used by the gesture

|

|

|

+ server. For example, the reference implementation uses screen

|

|

|

+ coordinates in pixels, where (0, 0) is the upper left corner of the

|

|

|

+ screen.

|

|

|

+

|

|

|

+ The abstract class definition of the event server should provide some

|

|

|

+ functionality to detect which driver-specific event server

|

|

|

+ implementation should be used.
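The role of the event server can be illustrated with a small Python sketch. It is not the reference implementation: the class names `EventServer` and `ParsedTuioEventServer`, the `on_frame` method and the handler signature are all hypothetical. The sketch also assumes that the alive session ids and their normalized positions have already been parsed from the driver messages, so no OSC or network code is shown.

```python
from abc import ABC, abstractmethod


class EventServer(ABC):
    """Translates driver-specific input to common events in screen pixels."""

    def __init__(self, handler, screen_size):
        self.handler = handler  # callable(event_type, point_id, x, y)
        self.width, self.height = screen_size

    @abstractmethod
    def start(self):
        """Start receiving driver-specific messages."""

    def emit(self, event_type, point_id, x, y):
        self.handler(event_type, point_id, x, y)


class ParsedTuioEventServer(EventServer):
    """Hypothetical TUIO-like server that is fed already-parsed frames."""

    def __init__(self, handler, screen_size):
        super().__init__(handler, screen_size)
        self.points = {}  # session id -> last known pixel position

    def start(self):
        pass  # a real implementation would listen on the TUIO UDP port here

    def on_frame(self, positions):
        """positions maps each alive session id to a normalized (x, y)."""
        # Ids that disappeared since the previous frame were released.
        for sid in sorted(set(self.points) - set(positions)):
            x, y = self.points.pop(sid)
            self.emit("point_up", sid, x, y)
        for sid in sorted(positions):
            nx, ny = positions[sid]
            pos = (int(nx * self.width), int(ny * self.height))
            if sid not in self.points:
                self.emit("point_down", sid, *pos)
            elif self.points[sid] != pos:
                self.emit("point_move", sid, *pos)
            self.points[sid] = pos
```

Because the handler only ever sees the three common event types in pixel coordinates, a server for another driver could be swapped in without changing any gesture detection code.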

|

|

|

+

|

|

|

+ \subsection{Gesture trackers}

|

|

|

+

|

|

|

+ A \emph{gesture tracker} detects a single gesture type, given a set of

|

|

|

+ touch points. If one group of points on the screen is assigned to one

|

|

|

+ tracker and another group to another tracker, multiple gestures can be

|

|

|

+ detected at the same time. For this assignment, the mechanism uses

|

|

|

+ windows. These will be described in the next section.

|

|

|

+

|

|

|

+ % event binding/triggering

|

|

|

+ A gesture tracker triggers a gesture event by executing a callback.

|

|

|

+ Callbacks are ``bound'' to a tracker by the application. Because

|

|

|

+ multiple gesture types can have very similar detection algorithms, a

|

|

|

+ tracker can detect multiple different types of gestures. For instance,

|

|

|

+ the rotation and pinch gestures from the experimental program in

|

|

|

+ section \ref{sec:experimental-draw} both use the centroid of all touch

|

|

|

+ points.

|

|

|

+

|

|

|

+ If no callback is bound for a particular gesture type, no detection of

|

|

|

+ that type is needed. A tracker implementation can use this knowledge

|

|

|

+ for code optimization.

|

|

|

+

|

|

|

+ % separation of algorithms

|

|

|

+ A tracker implementation defines the gesture types it can trigger, and

|

|

|

+ the detection algorithms to trigger them. Consequently, detection

|

|

|

+ algorithms can be separated in different trackers. Different

|

|

|

+ trackers can be saved in different files, reducing the complexity of

|

|

|

+ the code in a single file. \\

|

|

|

+ % extendability

|

|

|

+ Because a tracker defines its own set of gesture types, the application

|

|

|

+ developer can define application-specific trackers (by extending a base

|

|

|

+ \texttt{GestureTracker} class, for example). In fact, any built-in

|

|

|

+ gesture trackers of an implementation are also created this way. This

|

|

|

+ allows for a plugin-like way of programming, which is very desirable if

|

|

|

+ someone would want to build a library of gesture trackers. Such a

|

|

|

+ library can easily be extended by others.
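The tracker concept described above can be sketched in Python as a base class plus one application-defined subclass. Apart from the name \texttt{GestureTracker}, which the text mentions as an example, every method name, the `TapTracker` class and the 10 pixel threshold are assumptions made for this illustration only.

```python
import math


class GestureTracker:
    """Base class: subclasses declare gesture types and detect them."""

    gesture_types = ()  # names of the gestures this tracker can trigger

    def __init__(self):
        self.handlers = {}

    def bind(self, gesture_type, callback):
        if gesture_type not in self.gesture_types:
            raise ValueError("unsupported gesture type: %s" % gesture_type)
        self.handlers.setdefault(gesture_type, []).append(callback)

    def is_bound(self, gesture_type):
        return bool(self.handlers.get(gesture_type))

    def trigger(self, gesture_type, *args):
        for callback in self.handlers.get(gesture_type, []):
            callback(*args)

    # Point event hooks; subclasses override the ones they need.
    def on_point_down(self, point_id, x, y):
        pass

    def on_point_move(self, point_id, x, y):
        pass

    def on_point_up(self, point_id, x, y):
        pass


class TapTracker(GestureTracker):
    """Triggers 'tap' when a point is released close to where it went down."""

    gesture_types = ("tap",)
    max_distance = 10  # pixels; an arbitrary illustrative threshold

    def __init__(self):
        super().__init__()
        self.down_positions = {}

    def on_point_down(self, point_id, x, y):
        # Skip all bookkeeping when no callback is bound to 'tap'.
        if self.is_bound("tap"):
            self.down_positions[point_id] = (x, y)

    def on_point_up(self, point_id, x, y):
        start = self.down_positions.pop(point_id, None)
        if start is None:
            return
        if math.hypot(x - start[0], y - start[1]) <= self.max_distance:
            self.trigger("tap", x, y)
```

An application would bind a callback with something like `tracker.bind("tap", on_tap)`; trackers for other gestures would live in their own classes or files and follow the same pattern.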

|

|

|

+

|

|

|

+ \subsection{Windows}

|

|

|

+

|

|

|

+ A \emph{window} represents a subset of the entire screen surface. The

|

|

|

+ goal of a window is to restrict the detection of certain gestures to

|

|

|

+ certain areas. A window contains a list of touch points, and a list of

|

|

|

+ trackers. A gesture server (defined in the next section) assigns touch

|

|

|

+ points to a window, but the window itself defines functionality to

|

|

|

+ check whether a touch point is inside the window. This way, new windows

|

|

|

+ can be defined to fit over any 2D object used by the application.

|

|

|

+

|

|

|

+ The first and most obvious use of a window is to restrict touch events

|

|

|

+ to a single application window. However, windows can be used in a

|

|

|

+ much more powerful way.

|

|

|

+

|

|

|

+ For example, suppose an application contains an image with a transparent

|

|

|

+ background that can be dragged around. The user can only drag the image

|

|

|

+ by touching its foreground. To accomplish this, the application

|

|

|

+ programmer can define a window type that uses a bitmap to determine

|

|

|

+ whether a touch point is on the visible image surface. The tracker

|

|

|

+ which detects drag gestures is then bound to this window, limiting the

|

|

|

+ occurrence of drag events to the image surface.
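The window concept, including the bitmap example, can be sketched as follows. The class names, the `contains` method and the representation of the bitmap as a nested list of truthy values are assumptions chosen for brevity; they are not taken from the reference implementation.

```python
class Window:
    """Part of the screen surface with its own touch points and trackers."""

    def __init__(self):
        self.points = {}    # point id -> (x, y)
        self.trackers = []  # gesture trackers bound to this window

    def contains(self, x, y):
        raise NotImplementedError


class RectangularWindow(Window):
    """Window covering an axis-aligned rectangle in pixel coordinates."""

    def __init__(self, x, y, width, height):
        super().__init__()
        self.x, self.y = x, y
        self.width, self.height = width, height

    def contains(self, x, y):
        return (self.x <= x < self.x + self.width
                and self.y <= y < self.y + self.height)


class BitmapWindow(RectangularWindow):
    """Window that only contains pixels marked as visible in a mask.

    mask is a list of rows; a truthy entry marks a visible (foreground)
    pixel of the image placed at position (x, y) on the screen.
    """

    def __init__(self, x, y, mask):
        super().__init__(x, y, len(mask[0]), len(mask))
        self.mask = mask

    def contains(self, x, y):
        if not super().contains(x, y):
            return False
        return bool(self.mask[y - self.y][x - self.x])


# A 2x2 image whose upper left pixel is transparent.
image_window = BitmapWindow(10, 20, [[0, 1], [1, 1]])
print(image_window.contains(10, 20), image_window.contains(11, 20))
```

Binding a drag tracker to `image_window` would then limit drag events to touches that start on the visible part of the image.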

|

|

|

+

|

|

|

+ % assigning events to part of the screen:

|

|

|

+ % TUIO coordinates span the entire screen and range from 0.0 to 1.0, so

|

|

|

+ % they must be translated to pixel coordinates within a ``window''

|

|

|

+ % TODO

|

|

|

+

|

|

|

+ \subsection{Gesture server}

|

|

|

+

|

|

|

+ % listens to point down, move, up

|

|

|

+ The \emph{gesture server} delegates events from the event server to the

|

|

|

+ set of windows that contain the touch points related to the events.

|

|

|

+

|

|

|

+ % assignment of point (down) to window(s)

|

|

|

+ The gesture server contains a list of windows. When the event server

|

|

|

+ triggers an event, the gesture server ``asks'' each window whether it

|

|

|

+ contains the related touch point. If so, the window updates its gesture

|

|

|

+ trackers, which can then trigger gestures.
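The delegation performed by the gesture server can be sketched as follows. To keep the example self-contained, the window is a plain rectangle and each tracker is reduced to a callable; the class and method names are hypothetical. The sketch asks every window on every event, as described above; an implementation could also decide to do this check only on point down.

```python
class Window:
    """Minimal rectangular window; trackers are plain callables here."""

    def __init__(self, x, y, width, height, trackers=()):
        self.x, self.y = x, y
        self.width, self.height = width, height
        self.trackers = list(trackers)
        self.points = {}  # point id -> (x, y)

    def contains(self, x, y):
        return (self.x <= x < self.x + self.width
                and self.y <= y < self.y + self.height)

    def handle(self, event_type, point_id, x, y):
        # Keep the list of touch points up to date, then update trackers.
        if event_type == "point_up":
            self.points.pop(point_id, None)
        else:
            self.points[point_id] = (x, y)
        for tracker in self.trackers:
            tracker(event_type, point_id, x, y)


class GestureServer:
    """Delegates events from the event server to the matching windows."""

    def __init__(self):
        self.windows = []

    def add_window(self, window):
        self.windows.append(window)

    def handle_event(self, event_type, point_id, x, y):
        for window in self.windows:
            if window.contains(x, y):
                window.handle(event_type, point_id, x, y)
```

With two windows side by side, touches in the left half only update the trackers of the left window, so each window can detect its own gesture at the same time.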

|

|

|

+

|

|

|

+ \section{Diagram of component relations}

|

|

|

+

|

|

|

+ \begin{figure}[H]

|

|

|

+ \input{data/diagram}

|

|

|

+ % TODO: caption

|

|

|

+ \end{figure}

|

|

|

+

|

|

|

+ \section{Example usage}

|

|

|

|

|

|

% TODO

|

|

|

- % toewijzen even aan deel v/h scherm:

|

|

|

- % TUIO coördinaten zijn over het hele scherm en van 0.0 tot 1.0, dus moeten

|

|

|

- % worden vertaald naar pixelcoördinaten binnen een ``window''

|

|

|

+ % explain how to create a tracker, within a window

|

|

|

|

|

|

- \section{Trackers}

|

|

|

+ %\section{Network protocol}

|

|

|

|

|

|

% TODO

|

|

|

- % event binding/triggering

|

|

|

- % extendability

|

|

|

-

|

|

|

-% TODO: link naar appendix met schema

|

|

|

+ % use ZeroMQ for communication between multiple processes (in

|

|

|

+ % different languages)

|

|

|

|

|

|

\chapter{Reference implementation}

|

|

|

|

|

|

% TODO

|

|

|

+% only window.contains on point down, not move/up

|

|

|

|

|

|

\chapter{Integration in VTK}

|

|

|

|

|

|

% VTK interactor

|

|

|

|

|

|

-\chapter{Conclusions}

|

|

|

+%\chapter{Conclusions}

|

|

|

|

|

|

% TODO

|

|

|

% Windows are a way to assign global events to application windows

|

|

|

@@ -286,27 +486,17 @@ events.

|

|

|

\chapter{Suggestions for future work}

|

|

|

|

|

|

% TODO

|

|

|

-% Network protocol (ZeroMQ)

|

|

|

-% State machine

|

|

|

|

|

|

-\bibliographystyle{plain}

|

|

|

-\bibliography{report}{}

|

|

|

+% Network protocol (ZeroMQ) for multiple languages and simultaneous processes

|

|

|

+% Also here: an extra layer that creates gesture windows corresponding to the window manager

|

|

|

|

|

|

-\appendix

|

|

|

-

|

|

|

-\chapter{Diagram of mechanism structure}

|

|

|

-\label{app:schema}

|

|

|

+% State machine

|

|

|

|

|

|

-\begin{figure}[H]

|

|

|

- \hspace{-14em}

|

|

|

- \includegraphics{data/server_scheme.pdf}

|

|

|

- \caption{}

|

|

|

- %TODO: caption

|

|

|

-\end{figure}

|

|

|

+% Windows in a tree structure for efficiency

|

|

|

|

|

|

-\chapter{Supported events in reference implementation}

|

|

|

-\label{app:supported-events}

|

|

|

+\bibliographystyle{plain}

|

|

|

+\bibliography{report}{}

|

|

|

|

|

|

-% TODO

|

|

|

+%\appendix

|

|

|

|

|

|

\end{document}

|